Thanks to Ian Ferguson for this guest blog

Price to Win analyst Ian Ferguson discusses practices and trends in aerospace and defence software development.

Norm Augustine used his page in Aviation Week’s 100th Anniversary edition to skewer Aerospace Engineers for encouraging software’s contribution to the inexorable cost growth trends he famously described in “Augustine’s Laws” in 1984. A few weeks later, Sherman Mullin, former President of Lockheed’s Skunk Works described software development as “out of control, increasing exponentially and not addressed vigorously and objectively.” Aerospace software development productivity has come up repeatedly in this author’s consulting experience, typically from Tier 1 Original Equipment Manufacturers (OEMs) looking to benchmark performance and identify best practice. Clearly, the older generation remain in touch with current preoccupations. But nor has the industry stood idle – what has changed, and can digital development costs be brought under control?

Research Summary

Through 2016-17, Advisory: Ian H Ferguson researched practices and trends in Aerospace and Defence software development. This built on work for the author’s 2013 Whitepaper on Aerospace Engineering Productivity (note 1), conducted in conjunction with Aviation Week and Space Technology. The research comprised face-to-face and telephone interviews with Senior Engineering Management, representing 30-50% of the estimated global population of Aerospace and Defence software engineers (the range reflects the elastic definition of defence). The sample is biased towards high-criticality applications (~70% engaged in DAL A-B development rather than the 50% typically estimated for avionics) and towards aerospace rather than defence. Additionally, the work builds on Consultancy engagements completed by the author.

More, More! Faster, Faster!

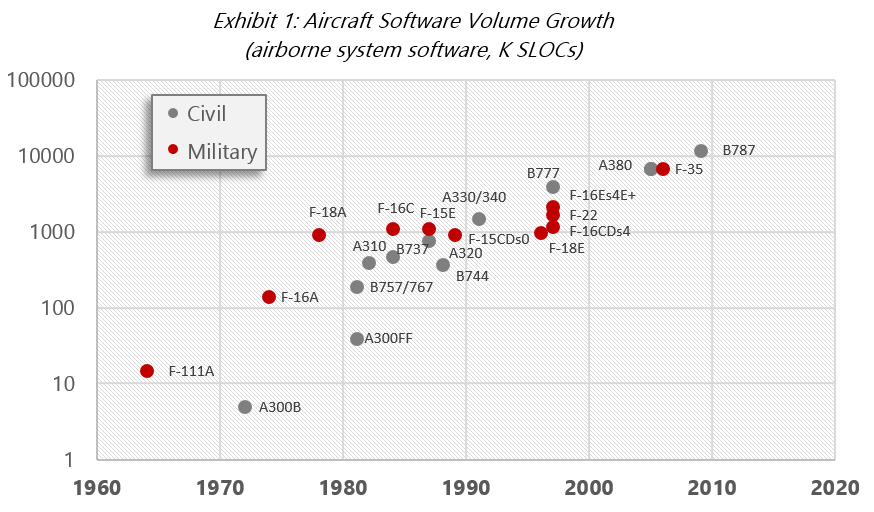

More sensors, more data and more data exchange: for networked combat, for passenger Wi-Fi, or for operations optimisation, whether for maintenance & support contracts or shared weather data. Greater complexity, more failure modes… The exponential growth trend looks set to continue.

In practice, aerospace struggles with the mismatch between long development/support cycles and consumer-driven evanescence of digital hardware. Since the millennium, Wi-Fi, touchscreens, the smartphone, multi-core and graphics processors have become ubiquitous. In the same period, the number of major programmes on which a mid-career Aerospace Engineer might have worked has fallen from 4-6 to one or two, while new avionics architectures have arrived and supply chain relationships have altered dramatically.

Delegation of Systems Integration responsibility was noted as a significant performance impediment in 2013. It remains a problem: not only where personnel lack experience, but also where OEM knowhow is shallow. While some emerging airframers are tackling technically ambitious programmes, even some established players have lost the ability to write and manage specifications, either as a generation of experienced Engineers leave the workforce or as a result of decades of supply chain restructuring. The effect is pernicious: suppliers now hold implicit responsibility for specification-writing and integration, but as risk-sharing partners their compensation is at best indirect and delivery dates remain fixed while kick-off dates slip. Worse, when integration conflicts arise between suppliers, the OEM is often unable to arbitrate effectively.

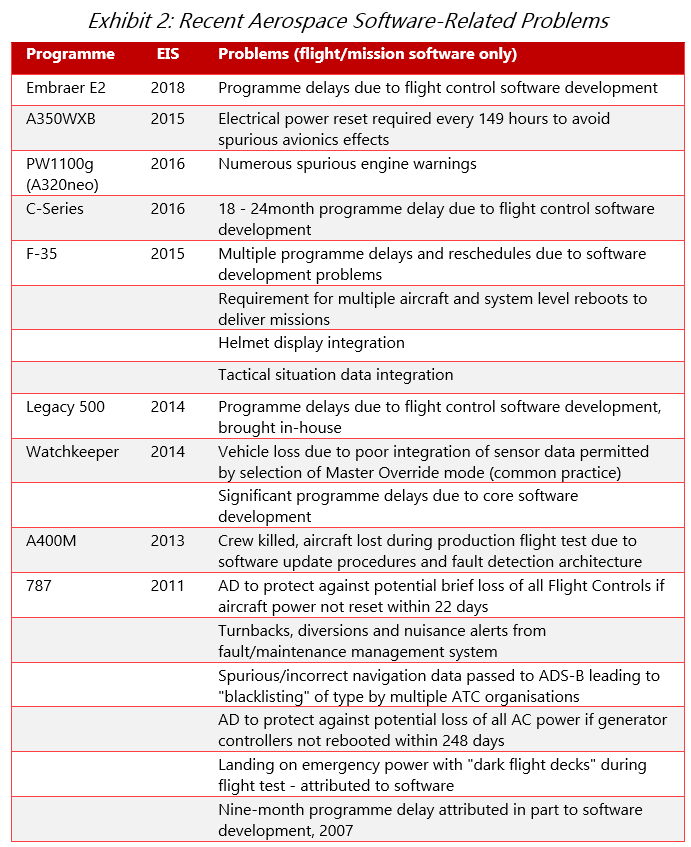

Late But Safe

In 2013, regulatory rigmarole & certification caprice took second place as cause of delivery delay and third for increased development costs and timescales. (Expectations of increased functionality and delegation of integration were considered more problematic.) The hope in 2013 was that the then-newly-released DO-178C might somehow reduce the burden. It hasn’t happened: instead, scrutiny has grown, often significantly. The style of complaints remains the same: “they get an easier time there in … than we do here” or “our regulator can’t make up their mind.” On the other hand, practitioners do quietly admit that DO-178C is a step forward from “B”, and the voluntary shift of a number of military programmes to the civilian processes is noteworthy: it’s not only a vote of confidence in the process’s effectiveness, but reflects a structural change that regulators also recognise. The challenge has shifted: from developing software that matches requirements, to ensuring that those requirements accurately reflect human intentions. Safety “escapes” are now increasingly at the design/requirements level: the hope is for regulatory focus to draw back from code development processes to System Safety Engineering.

Nor should progress in smaller niches be ignored. April 2016 saw the release of OpenGL SC 2.0, giving airborne display developers access to the power of modern graphics processing in a safety-critical environment: certification almost off-the-shelf, reducing CPU load and opening up previously unfeasible display possibilities, such as those advertised by Ensco’s July 2017 partnership with CoreAVI. Also in 2016, the rewrite of FAR Part 23 has the potential to unblock a pipeline of avionics innovation in smaller aircraft, allowing benefits of flight experience and larger markets to flow up to larger aircraft in the longer term.

Justifiable Abstraction

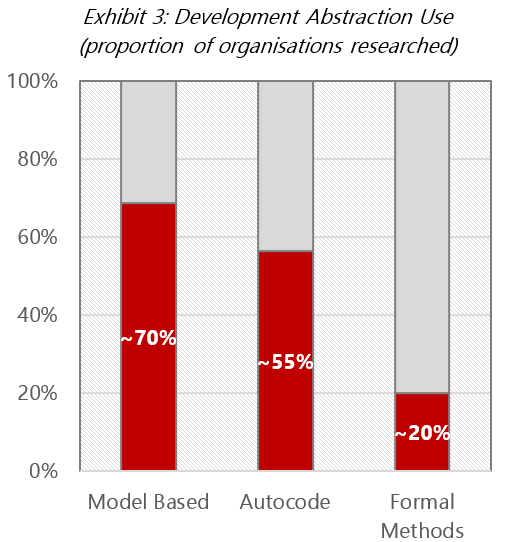

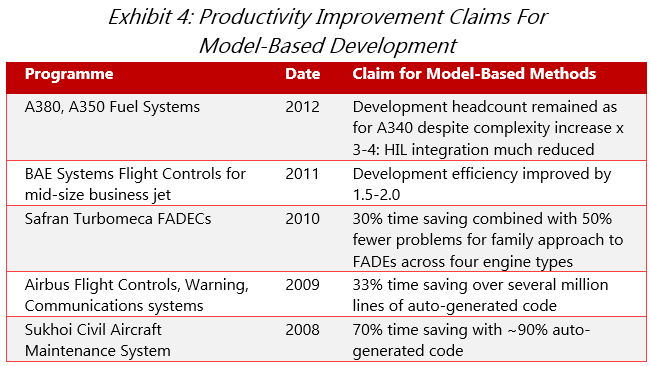

Long ago, at an avionics developer far, far away (note 2), statecharts were invented and Model-Based development was born. By 1999, auto-generated DAL A code had achieved certification: in 2006 Rockwell Collins ran a competitive selection between the two major Model-Based tool vendors, and auto-generated DAL A code received certification in the Americas (for the PW535B & E FADECs). The subsequent decade has seen dramatic claims – not solely from tool vendors – for productivity increments delivered by Model-Based software development. To what extent are they (and those for its cousin, Formal Methods) delivering in the context of real aerospace systems development?

The subject is broad. One standards working group found that its members held a dozen different understandings of Model-Based Development, with different approaches and little common ground: a high barrier for anyone trying to identify the best gains for their own organisations. At industry level, the big wins are when Systems Engineering can be unshackled from digital development considerations, primarily but not exclusively through automatic code generation. At one extreme, we found sustained long-term efforts at the point of delivering something akin to a new way of working: the comment from one Airframe OEM’s Head of Software Development was “I retire in five years, by which time my aim is to have removed software considerations entirely from our Systems Engineering activity.” More typically we found widespread satisfaction, with productivity claims translating into end-to-end project delivery performance.

An important subset of applications is ill-suited to automatic code generation. There can still be value in Model-Based approaches: the comment that “if you ask a Systems Engineer to write a requirement, you’ll get about 20% of what (s)he knows – if you ask them to write a test for the requirement, you’ll get about 80%” holds the clue. Model-Based approaches are powerful at identifying requirement lapses and weak design, and in getting test generation started early (while retaining the necessary independence of the test process.)

It’s not all roses. Machine-generated code is not only inefficient, but notoriously hard to review and support over the longer term. Nor are Model-Based approaches a panacea: there is a well-documented temptation to try to model everything, with workarounds, handwritten code modifications and duplication of effort that can outweigh productivity gains: even before discussions begin with skeptical Certification authorities. Some high-performing organisations have found it worthwhile to establish multiple alternative standard Model-Based workflows, each suited to different levels of project complexity and formality.

From Formal Methods, few report noteworthy productivity benefits; the succinct summary was that “we see no benefit until the Engineer works on their second programme – at present you almost need a PhD to use it.” A number of big players are trying hard, but efforts typically remain at the University/research stage.

Much of test automation is at a similarly early stage of development. Consensus is that there is value to be unlocked in test generation: but there’s little or no aerospace experience with the small number of third-party tools available, and many firms balk at the high cost of development/test tool qualification kits from the major Model-Based tool vendors (who follow a printer/ink pricing model in this area.) The goals remain ambitious and efforts fragmented; most report their best productivity improvements from focusing on test process automation.

Human Performance

Models may be widespread, but Software Lines of Code (SLOCs) still rule: for many, the Source Line Of Code remains, despite its well-known deficiencies, the measure of both project size and of day-to-day productivity. In 2013, we identified a perverse effect: organisations placing heavy reliance on SLOC/hr as a productivity measure were rarely those winning bids and delivering strong cost/delivery performance. By 2017, although we found a shift away from “chasing the number” towards Earned Value methods for project delivery, no consensus was found even among high-performing or large, experienced players on effective productivity metrics.

What is clear is that performance on the order of 1.0 developed SLOC per hour for DAL A code remains unchanged. In terms of hourly lines of code, Model-Based methods may deliver up to five to ten times as much (thereby inflating the code volumes seen in Exhibit 1) but for end-to-end project delivery, performance improvements typically net out to the 20-50% range.
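To put these figures in perspective, here is a minimal arithmetic sketch in Python. The 50,000-SLOC project size is hypothetical, and treating the 20-50% improvement as a multiplier on end-to-end productivity is only one reading of the figures, not something the research specifies.

```python
# Illustrative only: the project size is hypothetical; the rates are the
# ranges quoted in the text above.
BASELINE_SLOC_PER_HOUR = 1.0  # order-of-magnitude DAL A figure cited in the text


def effort_hours(sloc: int, end_to_end_gain: float = 0.0) -> float:
    """Hours to deliver `sloc` lines, assuming the gain scales end-to-end productivity."""
    return sloc / (BASELINE_SLOC_PER_HOUR * (1.0 + end_to_end_gain))


PROJECT_SLOC = 50_000  # hypothetical project size, chosen for illustration

baseline = effort_hours(PROJECT_SLOC)
low_gain = effort_hours(PROJECT_SLOC, 0.20)   # lower end of the 20-50% range
high_gain = effort_hours(PROJECT_SLOC, 0.50)  # upper end of the 20-50% range

print(f"baseline:      {baseline:,.0f} h")
print(f"with 20% gain: {low_gain:,.0f} h")
print(f"with 50% gain: {high_gain:,.0f} h")
```

Under these assumptions, even the upper end of the range leaves roughly two-thirds of the baseline effort in place, which is why a five- to ten-fold increase in hourly lines of code does not translate into a comparable saving at project level.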

Can this limit be broken? If so, it looks unlikely that there’s a magic bullet hidden in tools or development/test workflows. We found a bewildering variety of approaches, with an absence of consensus even on open-source tool use: some embrace their value while others are wary of vendor lock-in and support risks. Home-built code is ubiquitous, ranging in scale from “glue” used to support workflow automation right up to proprietary auto-coding Model-Based development environments.

There may be a new frontier open to aerospace: recruitment of exceptional humans, following such players as SAP, Microsoft, JP Morgan Chase, Hewlett Packard and others in pursuing employment of neurodiverse individuals (typically with an autistic spectrum diagnosis). Productivity increments of 40-45% above baseline have been reported (for projects at the Australian Department of Defence), coupled with exceptional task and employer loyalty. Better yet, risks are low: turnkey recruitment support is available for as few as 10 Full Time Equivalents (FTEs).

The First Rule of "Agile" Club

“33% of this organisation are fanatical about Agile, 33% ambivalent, 33% hate it” – hardly an endorsement, especially for a group of development approaches that doesn’t even have a definition (it has a Manifesto.) Nonetheless, we found that around 50% of organisations are deploying Agile practices in some form, and the proportion is growing: surprising, given the reported “allergic” reactions of Certification authorities, and the fact that hardware is frequently a pacing item for aerospace projects. (Many customers have no interest in receiving early development software versions – a characteristic response might be “come back in six months, we’ll have something for you to run it on.”) Clearly, adoption rates suggest that “iterative”, “incremental” (or indeed downright euphemistic) development practices bring value on their own merits: how? And what benefit is there in daily code release where an Engineer is producing 8-10 lines/day?

Claims for some Agile methods are as startling as those made for Model-Based Development – but in very different terms. Productivity is not multiplied – it is focused. Many report early identification of problem areas, early specification/requirements maturity (especially valuable with inexperienced Customers), rapid establishment of Customer confidence and unprecedented delivery forecasting accuracy.

On the other hand, not every culture or hierarchy welcomes Agile. Neither developers nor their management can hide when productivity impediments are identified: the relentless planning heartbeat shines light where it may not have been seen before, and if progress is not visible, the culture shift towards empowered teams setting and taking responsibility for their own priorities is seriously inhibited. There is consensus that once a team has adopted Agile practices, there is no going back: the pipeline of work must be kept full. Entry barriers are high: the training input is significant and must go beyond development staff themselves. The “ceremonies” – Scrums® or KanBan stand-ups, Sprints or Retrospectives – may masquerade as overhead, but contain crucial internal logic which is easily disrupted. Experience has shown that there is no place for management, however senior, to grandstand at a Sprint meeting: nor does Customer presence facilitate fully frank Scrum® discussion.

Once mature, however, learning curves fall dramatically, cross-team transfers are smoother and new toolchain/process pipecleaning is greatly eased. “Integration hell” (in which separately-developed modules won’t play together) is much rarer. The appropriate “heartbeat” for code release frequency varies widely: some players develop high-fidelity software with near continuous integration and teamwide “information radiators” of progress, technical debt and other performance parameters. Others use a lighter implementation, with 3-6 monthly code releases, but an emphasis on scope maturity, early test development and robust earned value/estimate-to-complete assessment. It may be that under Agile, lines of code are not written much faster, but the right lines are written. Let the last word be with the VP of Product Engineering for a major Systems OEM – “The Customer wants stable hardware in their lab even before the Requirements are mature. Agile methods are really good for this situation.”

Made In India - For Many Years

The 2013 study exploded any myth of easy cost reductions through labour arbitrage in aerospace Engineering. This will not surprise the biggest players in cockpit avionics, seven of the eight (note 3) having over a decade’s experience of substantial outsourcing. The assessment then was that productivity equivalent to home base should be attainable within 12-36 months of facility set-up, but net payback including management overhead was typically a 4-10 year journey. What have recent events shown? GE and Honeywell appear to have stuck firm to their long-standing policies of substantial globalisation. Rockwell Collins was a relative latecomer to India (2008) but significantly expanded operations beyond the ~630 FTE Hyderabad Design Centre to an expected 100 more in Bangalore: in line with the plans of another Tier 1 Systems supplier who intends to transition their Indian commitment from a low-cost test services supplier to a majority-owned software development centre. On the other hand, Thales has brought its Indian software development shareholding in line with its avionics development interests in Samtel and Bharat, by reducing it to 26%.

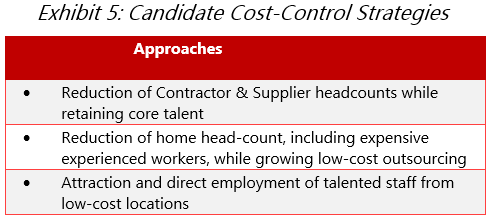

Not all were looking for low cost: the hunt for talent is still reported as a key driver for outsourcing initiatives. Retention strategies remain crucial: there are aerospace projects in which the level of specialisation is such that individual Engineers simply face growing piles of problem reports, and many lose visibility of overall project progress. The risk here is that fast-moving, high-reward consumer projects outweigh any emotional reward that’s sometimes associated with aerospace. The same problems contribute to staff turnover in outsourced facilities, the majority of which are in India and still tackle only testing and verification. Some Tier 1 suppliers are trying to tackle these challenges by recruiting and employing talented staff from low-cost locations back into their home bases, and inspiring motivation by establishing clear Engineering Centre of Excellence policies.

Don't Write It Again, Sam

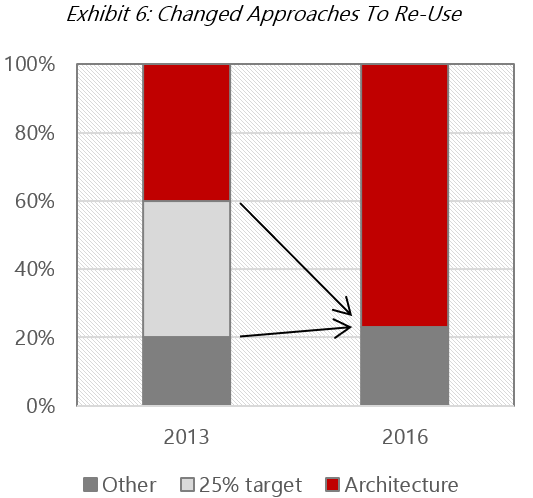

Quite recently, a widespread metric for aerospace software development was 25% code re-use. This was likely once a good idea: but 2013’s research uncovered stories of various perverse outcomes. By 2017 the approach had more-or-less disappeared in favour of “strategic architectures” and occasional embrace of Product Line Engineering. The goal is delivery of long-term re-use potential for software modules at all levels of abstraction, from high level application all the way down to machine level.

In principle, there is no better way. How better to grow productivity than pull the parts off the shelf, clip them together and populate the configuration table for whatever the airframe wizards come up with next? After all, how many times need the industry re-invent (for example) flight control algorithms? Core flight physics models are now ubiquitous off-the-shelf: the drivers of truly novel functionality are infrequent (new propulsion, configurations, stealth requirements, perhaps in due course adaptive controls). Could software modules become sufficiently commoditised that we reach “Peak SLOC”?

The Future Airborne Capability Environment was formed in 2010 with hopes to lead such a journey. It’s a voluntary consensus standards body which aims to improve cost and delivery performance for military avionics through facilitation of software and hardware re-use. 2015 saw the joining of the US Air Force as a full sponsor – a major step, for a US Navy initiative – and Rockwell Collins received the first FACE conformance certification in 2016 for an RNP/RNAV FMS. An eventual move into commercial avionics has not been discounted.

At the working level, re-use remains hard to do, with some big names stumbling. It’s not enough to drop modules into a repository: the challenge is to document your assets sufficiently well that Bid Teams are willing to rely on them, and that Engineers will overcome their natural “Not Invented Here” response (“integrating that looks hard and the person who wrote it has left – easier to write my own…”). Mundane, but major – such initiatives easily grow into science projects. The claim (note 4) that “we have made future civil and military programs more affordable by resetting the avionics cost curve and doing away with escalating software development costs” seems extravagant, even in the speaker’s context of winning 777X common core based on 787.

Clearly the stakes are high. (The risks too: it’s easy to design a strategic architecture in which a minor high-criticality requirement pushes DAL A right across the design, or late-lifecycle changes ripple across a whole product family.) Thales’s acquisition of Sysgo (2012) might barely attract attention at only ~80 FTEs, but gives the company privileged access to a keystone technology: a flexible, competitive, certificated RTOS (PikeOS) with significant aerospace growth potential (and penetration – Airbus, Embraer, Rockwell Collins, Meggitt, MBDA, KMW.) Five years later, in the era of delivery, cost control and “No New Programmes”, it is questionable how much appetite there is amongst Tier 1 suppliers for such investments which, in the relatively small aerospace software development context, are truly strategic. Perhaps there are some winners out there: our research identified a small population of Tier 1 suppliers who’d established a product family architecture 8-12 years ago, with by now typically ~50% of their products benefiting from the strategic re-use approach pioneered by Honeywell (Primus Epic) and Rockwell Collins (ProLine) in flight deck architectures around the turn of the millennium.

Conclusions

Digital systems functionality and complexity will keep growing. We’re already pushing at the limits of human performance and talent availability… and there seem to be no truly strategic responses or initiatives on the horizon. Against this background, what ideas are crucial for Executive Management planning aerospace software development for the mid-term? And what can we tell Norm?

The Secure Airborne Wi-Fi Toaster?

Prepare for two major changes. There’s already been too much talk (Consultants are guilty…) about the aerospace Internet of Things, and the applications of Big Data: but it’s happening, with any doubt removed by the UTAS/Rockwell Collins/B/E and Safran/Zodiac acquisitions. Whether status-reporting galley inserts or weather radars using Clouds to share precipitation data, the Things will all require more software. Getting the resulting data onto and off the jet has been a major impediment (an ugly choice between fragmentary airfield Wi-Fi, costly/low-bandwidth HF/Satcomms options and even manual methods) but these barriers are falling fast.

As access becomes easier, so do assaults. The world’s largest oil producer, Saudi Aramco, suffered two weeks of major disruption and infection of as many as 30,000 computers in 2012 by the Shamoon virus, purportedly developed in Iran. While the ability of lone hackers to access vehicle systems has been over-reported, there are certainly multiple state actors with the capability – and perhaps incentive – to cause major infrastructure disruption. Aerospace safety process experience may have some value in delivering security: but although safety processes sometimes consider incompetence, malevolence is beyond them. Alongside dramatic growth in software volumes, we face a new layer of overhead beyond the scale of retrofitting fortress-like cockpit doors.

Motown Magic?

Aerospace software development’s structural challenges are rarely mentioned. Small scale and high fragmentation are a disadvantage for all participants: they inhibit transferability of staff and knowledge, extend learning curves and defer breakeven for productivity tools and initiatives. With a huge variety of tools and approaches, the services market struggles with efficiency and continuity: witness the numerous subscale development firms that split their activities between nuclear, medical device, railway and aerospace projects.

Automotive digital technologies have already entered Aerospace: witness the Boeing and Airbus applications of the Robert Bosch CANbus. What’s changed is that the Automotive industry is now tackling safety-critical functions in a complex environment of the sort with which aerospace has long familiarity. With scale around ten times that of aerospace, and much shorter development cycles, the environment for developing digital systems COTS tools and productivity initiatives is that much more attractive: the analogy is particularly strong in testing approaches. A closer relationship may prove productive for both sides.

Recommendations

Meanwhile, how can Executive Management improve productivity, cost and delivery performance? The temptation is always to look at the neighbours and the adjacent sectors; perhaps there’s something out there we’ve missed, something that’ll crack our problems. Motown may help, but what should be clear from this research is that there are no magic bullets, no drop-in universally-applicable productivity improvement techniques, no easy COTS tools. What’s to be done?

“Do, or do not – there is no try”: Yoda’s advice may be particularly apposite for the major productivity improvement approaches noted here. Their risks are high: of wasted resources, or of messing up what you’ve already got. These are organisation-level initiatives. Payback periods are long, training inputs and other overheads may be high and recurring, and there is potential for discomfiting culture change. The comment from one high-performing organisation – “We view all these productivity improvement initiatives as strategic” – accurately describes the challenge: do not underestimate the costs, scale and commitment required to implement such changes: or the value that they can deliver.

Meanwhile, “Stay calm and implement.” Software is emphatically an Engineered product: dependent on tools and processes. This research found nothing resembling the universal panacea that some of my customers hoped for. It did reveal a number of examples in which ill-chosen tools or suboptimal processes (sometimes too much, sometimes too little) proved to be significant inhibitors of productivity. The challenge is to identify the blockers and prescribe effective remedies: across the full scope of a software development workflow. Multiple incremental improvements are rarely glamorous, but much is typically possible without invoking a full Kaizen approach.

Finally, share the big picture with development Engineers. Not only is there obvious value to motivation and retention, but it is also critical to the success of any process improvement initiative. Despite the knowledge, education and intelligence typical in this area of Aerospace Engineering, this research has revealed distressingly frequent examples of the Law of Unintended Consequences. Above all others, software development is arguably the aerospace engineering discipline in which results are driven most directly by people management. As well as the humility to recognise and fix process mis-steps, it requires exceptional leadership to tackle the challenge of keeping smart humans productive and effective, writing the right code, first time every time.

1 Ferguson, Ian, 2014 “The World Is Not Flat: Aerospace Engineering Gears Up For New Challenges”, while employed at ICF International, icfi.com/aviation

2 Lavi fighter development at IAI in 1983, according to David Harel and others – statecharts eventually fed via I-Logix and Telelogic AB into the IBM Rational Rhapsody toolsuite

3 Garmin claims benefits from a policy of vertical integration for aerospace products.

4 Alan Caslavka, President GE Aviation Avionics & Digital Systems

Ian Ferguson is a Price to Win analyst with Amplio. He has many years’ experience in aerospace and specialises in helicopter upgrades and commercial aircraft.